As a Digital Performance Analyst, it’s my job to use analysis to show how the digital products and services in DWP are adding value and meeting user needs. I work alongside delivery teams to identify their service’s Key Performance Indicators (KPIs) and uncover ways that they can measure these goals. By using data and analytics, I provide insights on behaviours and trends to inform user centred designs and improvements to the services.

At the start of 2020, I began working to support the New Style JSA team. The service recently launched into Public Beta during the COVID-19 lockdown.

Supporting a digital service team

From early conversations with the team, I learned that one of the challenges they faced was a lack of evidence and rationale behind some of the key decisions and designs made by the previous team working on the service.

To overcome this, the team set out to complete a review of the design, content and user research of the service. This developed the team’s understanding of the user needs, the business context and some of the constraints they would have to work with.

Building on this knowledge, I worked with the team to form their service’s measurement framework. We started with user needs, explored what success and failure would look like for the service and from there discussed what the success factors and KPIs should be.

Having people from different disciplines in the conversation really helped, as we were able to cover user, business and data perspectives. By the time we had finalised the framework, the team had a better understanding of what they needed to measure, why it was important and how they were going to source this data.
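To give a flavour of what a measurement framework can look like once it's written down, here's a minimal sketch in Python. It links each user need to a success factor and then to KPIs with a data source and a priority. The class names, the `prioritised_kpis` helper and every example entry are hypothetical and for illustration only; they are not the actual New Style JSA framework.

```python
from dataclasses import dataclass, field


@dataclass
class KPI:
    name: str
    data_source: str  # where the measure would be sourced from
    priority: int     # 1 = measure first


@dataclass
class UserNeed:
    statement: str
    success_factor: str
    kpis: list[KPI] = field(default_factory=list)


def prioritised_kpis(needs: list[UserNeed]) -> list[KPI]:
    """Flatten every KPI in the framework and order by priority (lowest first)."""
    all_kpis = [kpi for need in needs for kpi in need.kpis]
    return sorted(all_kpis, key=lambda kpi: kpi.priority)


# Hypothetical entries, not taken from the real service.
framework = [
    UserNeed(
        statement="I need to apply online without needing help",
        success_factor="Users complete the application unaided",
        kpis=[
            KPI("Completion rate", "Google Analytics", priority=1),
            KPI("User satisfaction", "Feedback survey", priority=2),
        ],
    ),
    UserNeed(
        statement="I need to understand whether I can apply",
        success_factor="Users who start are likely to be eligible",
        kpis=[
            KPI("Drop-out rate on eligibility questions", "Google Analytics", priority=1),
        ],
    ),
]

for kpi in prioritised_kpis(framework):
    print(f"{kpi.priority}: {kpi.name} ({kpi.data_source})")
```

Keeping the user need, the success factor and the KPI together in one record means every metric can be traced back to the need it is meant to evidence.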

“It’s so great to have data”

We then used this framework to help us plan and prioritise which metrics needed to be measured first. We worked together to have Google Analytics enabled on the service alongside a compliant cookie consent mechanism.

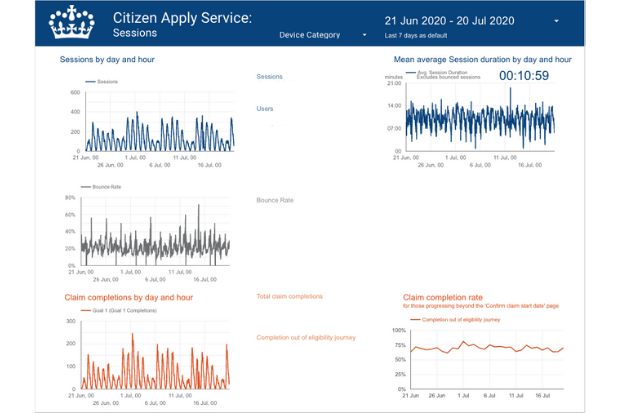

Once these were in place, dashboards were created to give the service team visibility of the key measures and data that could be sourced from Google Analytics.

I worked with the team on the changes and iterations they had made to their service. Using analytics data, I was able to provide robust analysis and statistically sound insights on what had improved, how it had improved and whether further recommendations or iterations were needed.
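As an illustration of the kind of check that can sit behind "statistically sound", here's a minimal sketch of a two-proportion z-test comparing a completion rate before and after an iteration. This is an assumed example of one common technique, not necessarily the method used on New Style JSA. The function name and all of the figures are made up for illustration.

```python
from math import erf, sqrt


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_a = success_a / total_a
    p_b = success_b / total_b
    # Pooled proportion under the null hypothesis that both rates are equal
    pooled = (success_a + success_b) / (total_a + total_b)
    se = sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Made-up figures: applications completed out of applications started
before_completed, before_started = 400, 1000
after_completed, after_started = 460, 1000

z, p = two_proportion_z_test(
    before_completed, before_started, after_completed, after_started
)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the change in completion rate is unlikely to be down to chance alone. It doesn't explain why users behaved differently, which is where qualitative research comes in.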

It was great to see the team becoming more engaged and understanding the benefits of using data. We’re now working together on further hypotheses and iterations in the backlog, so it’ll be exciting to see what happens next.

Telling the service’s story with data

As with most Digital Government services, the service assessment is typically the milestone where the team shares what they have done in the phase they’ve completed.

At the New Style JSA GDS Beta assessment, the team were able to tell a great story. They shared the hypotheses they worked from and showed the iterations they had made, along with the improvement and change that could be seen using both quantitative data and qualitative research.

By using data to validate the successes and failures of the changes and improvements that had been made, the team were able to demonstrate their learnings.

As the Digital Performance Analyst supporting the service, I feel proud to have been able to encourage and help the team to use data evidence to make effective decisions that have improved their service for users.

Of course, the cherry on the cake was the service getting a “Met” result from the assessment. Officially this means the service can now continue to the next phase of development in Public Beta. To me it’s validation (and a confidence boost) that our peers in government can see how the service has been built with the Service Standard in mind.

7 comments

Comment by Dan Prendergast posted on

Great work Jenny! The framework you've used, starting from user needs to drive goals and metrics, makes complete sense. Nice to see a real balance of quant and qual research being applied to a positive outcome. From experience, it can be a real challenge getting that balance just right.

Comment by Jenny Murray posted on

Thanks Dan! I agree, it definitely can be hard to balance qualitative and quantitative research, but I think it's an important challenge to work through as part of user centred design.

Comment by Jane Hall posted on

Great blog post, Jenny. Good to see you still having an impact!

Comment by Jenny Murray posted on

Thanks Jane 🙂

Comment by Sarah-Jane Moldenhauer posted on

One thing I will agree with is that analytics can help a person understand what content needs to be tweaked and looked at, helps with making better decisions and improves productivity. However, analytics also has to take into account that certain topics will garner more attention than others, so it is wrong to let analytics drive all the decisions for the content. Writing for a person is a lot more satisfying than writing blindly on numbers alone, and Google agrees.

I have only ever used analytics to help me in deciding which content needs to be looked at (in the past- 10 years ago). Ultimately, my instinct and experience gained from participation in online communities, installing PHP scripts on my own shared hosted domains, and writing content, trumps trusting blind numbers any day. So, when it came to websites, analytics only helped with some decisions. Saying that, I didn't have to impress shareholders with analytics or anything, and perhaps that is what analytics is in this day and age. Carefully crafted content meant for manipulation without considering the 'why' behind the action. Analytics show us what users do, not 'why' they do it. That is why Google favours actual content to be ranked these days which helps people. While retail sites have to battle it out, and gain favour by paying, the organic site with relevant information geared towards their ideal market, will win a lot more in the long run.

I only have to look at my own name in Google, realising that I rank on the first two pages of Google.com almost exclusively. I didn't use analytics and I didn't manipulate trends or keywords either. I was just being my 'authentic self.' 🙂

Comment by Jenny Murray posted on

Thanks for sharing your experience, Sarah. Completely agree trusting blind numbers alone is never the right approach. We are really lucky at DWP Digital to be able to work with some really great User Researchers who focus on the qualitative research to understand the “Why”.

I think understanding both the quantitative and qualitative sides is key to making better decisions.

Comment by Sarah-Jane Moldenhauer posted on

You are right. DWP Digital is lucky to have a lot of experienced people working together who ensure the right content is seen by the right people. 🙂